machine learning

Cranmer to lead campus RISE-AI Collaboration HQ

Dark matter and pencil jets: The search for a low-mass Z’ boson using machine learning

This story, featuring physics grad student Abhishikth Mallampalli, was originally published by the CMS collaboration.

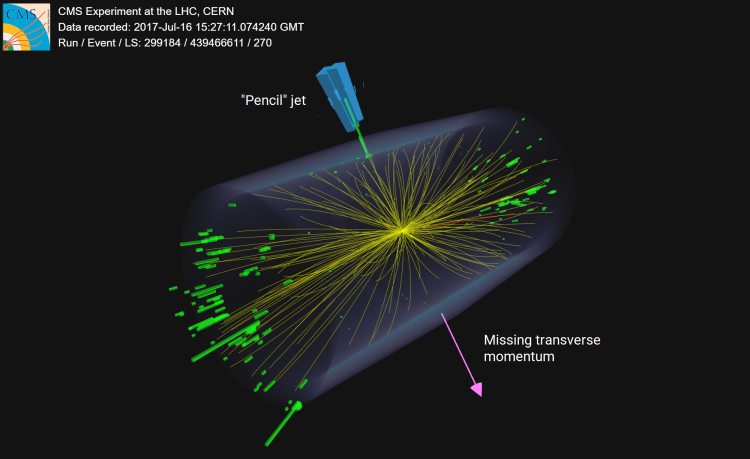

The CMS experiment conducts the first search for dark matter particles produced in association with an energetic narrow jet—the pencil jet.

Dark matter remains one of physics’ greatest mysteries. Despite making up about 27% of the universe’s energy content, its true nature is unknown. Astonishingly, all ordinary matter (stars, planets, our phones, the wires transferring data, the waves carrying WiFi, you and me) accounts for just 5% of the total energy content of our universe. If our known world is so diverse, the dark sector (dark matter together with dark energy), which outweighs it roughly 20-to-1, could be just as rich. At CERN’s CMS experiment, scientists are searching for dark matter particles, aiming to reveal their interactions and revolutionize our understanding of our universe.

But if dark matter is so abundant, why haven’t we detected it? As the name suggests, dark matter does not interact with light (electromagnetism) with the same strength as ordinary matter (the behavior of which is explained by the Standard Model) and, so far, is only known to interact with known particles through gravity, the weakest of the four known fundamental forces. At CMS, scientists use momentum conservation to infer the presence of dark matter: missing momentum in particle collisions (after accounting for detector mismeasurements) could signal an invisible particle, possibly dark matter, slipping away undetected.
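As a rough illustration of the momentum-conservation idea above, the sketch below sums the transverse momenta of the visible particles in a toy event and takes the negative of that sum as the missing transverse momentum. It is a minimal sketch, not CMS reconstruction code: the function name, the `(pt, phi)` input format, and the toy event are assumptions made for illustration. The balance is done in the plane transverse to the beam because the momentum along the beam direction is not known collision by collision.

```python
import math


def missing_transverse_momentum(particles):
    """Return (magnitude, phi) of the missing transverse momentum.

    `particles` is an iterable of (pt, phi) pairs for the visible particles,
    with pt in GeV and phi in radians. This input format is a hypothetical
    choice for this sketch.
    """
    # Vector sum of the visible transverse momenta.
    px = sum(pt * math.cos(phi) for pt, phi in particles)
    py = sum(pt * math.sin(phi) for pt, phi in particles)
    # If momentum is conserved, anything invisible must balance the visible sum.
    met_x, met_y = -px, -py
    return math.hypot(met_x, met_y), math.atan2(met_y, met_x)


# Toy event (made-up numbers): a single 200 GeV jet with nothing visible
# recoiling against it.
visible = [(200.0, 0.0)]
met, met_phi = missing_transverse_momentum(visible)
print(f"missing pT = {met:.1f} GeV, pointing at |phi| = {abs(met_phi):.2f} rad")
```

In this toy event the missing momentum has the same size as the jet's and points in the opposite direction, which is the pattern expected when an invisible particle recoils against a single jet. In real data, detector mismeasurements also produce apparent imbalances, which is why they must be accounted for first.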

In addition to particles that make up dark matter, there could be as yet undetected particles that mediate interactions between the dark particles and the matter particles. These are creatively called, you guessed it, mediators. Such mediators are bosons, implying that they carry integer spin quantum numbers, as opposed to fermions (e.g. electrons), which have half-integer spins. One such mediator is the hypothesized Z’ boson, which is electrically neutral and has a spin quantum number of 1.

Typical CMS searches focus on heavy Z’ particles in the hundreds of GeV to TeV range, but a lighter Z’ boson could also exist in the dark sector. It is typically a lot more challenging to look for such light particles due to the overwhelming background from hadronic resonances and quantum chromodynamics (QCD) processes—related to the strong nuclear force—which are poorly modeled at low energies. This is where techniques like data augmentation and machine learning can be utilized, enhancing sensitivity to Z’ decays while suppressing known background processes.

The Z’ boson mass that we are looking for in this search is around 1 GeV. Because of its low mass, the Z’ boson can only decay to light quarks (u, d, s); combined with its high boost (momentum), these quarks hadronize to form a narrow jet (a spray of particles) with fewer constituents than usual. We then look for dark matter recoiling against such a narrow jet (called a pencil jet). This is the first search at the LHC for this signal. Various selections are applied to reduce the background processes while retaining the signal process, and a combination of neural networks and boosted decision trees is used to further extract the signal. Multiprocessing techniques are used to speed up the processing of the events.
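To get a feel for why such a jet is pencil-thin, a common rule of thumb says that the decay products of a particle of mass m and transverse momentum pT are separated by an angular distance of roughly 2m/pT. The sketch below applies that approximation to a 1 GeV Z’ boson. The transverse momentum values and the reference jet radius of 0.4 are assumptions chosen for illustration; they are not taken from the CMS analysis.

```python
def approx_opening_angle(mass_gev, pt_gev):
    """Rule-of-thumb angular separation of two-body decay products: 2 m / pT."""
    if pt_gev <= 0:
        raise ValueError("pt_gev must be positive")
    return 2.0 * mass_gev / pt_gev


Z_PRIME_MASS_GEV = 1.0    # "around 1 GeV", as quoted in the text
REFERENCE_JET_RADIUS = 0.4  # assumed reference jet size, for comparison only

for pt in (50.0, 100.0, 200.0):  # hypothetical transverse momenta in GeV
    delta_r = approx_opening_angle(Z_PRIME_MASS_GEV, pt)
    fraction = delta_r / REFERENCE_JET_RADIUS
    print(f"pT = {pt:5.0f} GeV -> opening angle ~ {delta_r:.3f} "
          f"({fraction:.1%} of the reference jet radius)")
```

Under these assumptions the decay products sit within a few percent of the reference jet radius, so the whole decay is collimated into a very narrow cone.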

“The main challenge in this analysis of real-world data was that the physically motivated input features aren’t typically well modeled in our simulations and so we had to take steps to ensure model robustness. We showed that using machine learning can help us achieve up to 10 times more sensitivity to these rare signal processes compared to traditional strategies,” says Abhishikth Mallampalli, a graduate student at the University of Wisconsin–Madison who is leading this search. Statistical hypothesis testing is used to determine whether the observed data agrees with the Standard Model prediction or suggests the presence of a dark matter signal.

We see that the data agrees well with the Standard Model expectation across the three years of proton-proton collision data analyzed. While this means that a Z’ boson with the probed light masses is disfavored at the 95% confidence level, null results in such searches for dark matter not only solidify the Standard Model but also serve as guidance to theorists in building new physics models for dark matter, and help experimentalists to identify the direction for future searches.
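To make the phrase "95% confidence level" more concrete, here is a toy counting experiment. Given an observed event count and an expected background, it finds the largest signal yield that is still compatible with the data at 95% confidence, using plain Poisson statistics. This is only a sketch under simplifying assumptions: the function names and the example numbers are invented, there are no systematic uncertainties, and real searches use more elaborate likelihood-based procedures. It is not the method used in the CMS analysis.

```python
import math


def poisson_cdf(n, mu):
    """P(N <= n) for a Poisson distribution with mean mu."""
    term = math.exp(-mu)  # P(N = 0)
    total = 0.0
    for k in range(n + 1):
        total += term
        term *= mu / (k + 1)  # turn P(N = k) into P(N = k + 1)
    return total


def upper_limit(n_obs, background, cl=0.95):
    """Signal yield s at which P(N <= n_obs | s + background) drops to 1 - cl."""
    alpha = 1.0 - cl
    if poisson_cdf(n_obs, background) <= alpha:
        return 0.0  # toy convention: background alone already overshoots the data
    lo, hi = 0.0, 1.0
    while poisson_cdf(n_obs, hi + background) > alpha:
        hi *= 2.0  # expand the bracket until the limit lies inside it
    for _ in range(100):  # bisection
        mid = 0.5 * (lo + hi)
        if poisson_cdf(n_obs, mid + background) > alpha:
            lo = mid
        else:
            hi = mid
    return hi


# Hypothetical numbers: 10 events observed where 10 background events are expected.
limit = upper_limit(n_obs=10, background=10.0)
print(f"signal yields above about {limit:.1f} events are disfavored at 95% CL")
```

Signal hypotheses predicting more events than this limit would have produced a count as low as the observed one less than 5% of the time, which is the sense in which they are disfavored.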

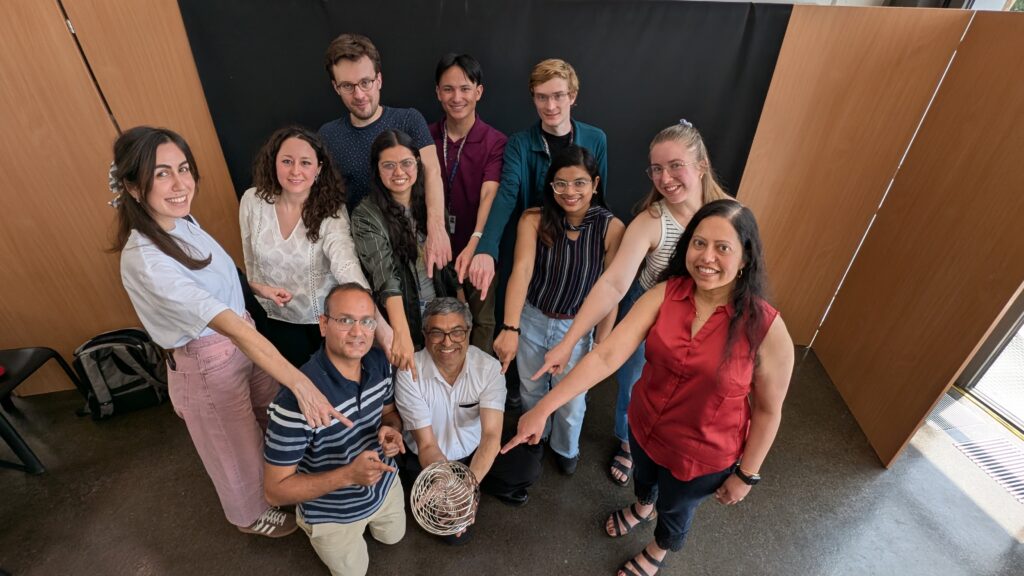

UW–Madison scientists part of team awarded Breakthrough Prize in Physics

A team of 13,508 scientists, including over 100 from the University of Wisconsin–Madison, won the 2025 Breakthrough Prize in Fundamental Physics, the Breakthrough Prize Foundation announced April 5. The Prize recognized work conducted at CERN’s Large Hadron Collider (LHC) between 2015 and 2024.

The Breakthrough Prize was created to celebrate the wonders of our scientific age. The $3 million prize will be donated to the CERN & Society Foundation, which offers financial support to doctoral students to conduct research at CERN.

Four LHC projects were awarded, including ATLAS and CMS, both of which UW–Madison scientists work on. ATLAS and CMS jointly announced the discovery of the Higgs boson in 2012, and its discovery opened up many new avenues of research. In the years since, LHC researchers have worked towards a better understanding of this important particle because it interacts with all matter and gives other particles their mass. Both teams are actively engaged in analyzing LHC data in search of exciting and new physics.

“The LHC experiments have produced more than 3000 combined papers covering studies of electroweak physics and the Higgs boson, searches for dark matter, understanding quantum chromodynamics, and studying the symmetries of fundamental physics,” says CMS researcher Kevin Black, chair of the UW–Madison department of physics. “This work represents the combined contributions of many thousands of physicists, engineers, and computer scientists, and has taken decades to come to fruition. We are all very excited to be recognized with this award.”

ATLAS and CMS have generally the same research goals, but different technical ways of addressing them. Both detectors probe the aftermath of particle collisions at the LHC and use the detectors’ high-precision measurements to address questions about the Standard Model of particle physics, the building blocks of matter and dark matter, exotic particles, extra dimensions, supersymmetry, and more.

The ATLAS team at UW–Madison has taken a leadership role in both physics analyses and computing. They have spearheaded precision measurements of the Higgs boson’s properties and conducted extensive searches for new physics, including dark matter, achieving major sensitivity gains through advanced AI and machine learning techniques. In addition to leading developments in computing infrastructure, the team has played a crucial role in the High-Level Trigger system and simulation efforts using generative AI, further enhancing the experiment’s capabilities.

The CMS team at UW–Madison has played and continues to play key roles in trigger electronics systems, which are ways of sorting through the tens of millions of megabytes of data produced each second by a collider experiment and retaining the most meaningful events. They also manage a large computing cluster at UW–Madison, contribute to the building and operating of muon detectors, make key contributions to CMS trigger and computing operations, and develop physics analysis techniques including AI/ML. The CMS group efforts are well recognized in the recently published compendium of results, dubbed the Stairway to Heaven.

CMS and ATLAS research at UW–Madison is largely supported by the U.S. Department of Energy, with additional support from the National Science Foundation.

The following people had a UW–Madison affiliation during the time noted by the Prize:

Current Professors

Kevin Black, Tulika Bose, Kyle Cranmer, Sridhara Dasu, Matthew Herndon, Sau Lan Wu

Current PhD Physicists

Pieter Everaerts, Matthew Feickert, Camilla Galloni, Alexander Held, Wasikul Islam, Charis Koraka, Abdollah Mohammadi, Ajit Mohapatra, Laurent Pétré, Deborah Pinna, Jay Sandesara, Alexandre Savin, Varun Sharma, Werner Wiedenmann

Current Graduate Students

Anagha Aravind, Alkaid Cheng, He He, Abhishikth Mallampalli, Susmita Mondal, Ganesh Parida, Minh Tuan Pham, Dylan Teague, Abigail Warden

Current Engineering Staff

Shaojun Sun

Current Emeriti

Sunanda Banerjee (Senior Scientist), Richard Loveless (Distinguished Senior Scientist), Wesley H. Smith (Professor)

Alumni

Michalis Bachtis (Ph.D. 2012), Swagato Banerjee (Postdoc 2015), Austin Belknap (Ph.D. 2015), James Buchanan (Ph.D. 2019), Cecile Caillol (Postdoc), Duncan Carlsmith (Professor), Maria Cepeda (Postdoc), Jay Chan (Ph.D. 2023), Stephane Cooperstein (B.S. 2014), Isabelle De Bruyn (Scientist), Senka Djuric (Postdoc), Laura Dodd (Ph.D. 2018), Keegan Downham (B.S. 2020), Evan Friis (Postdoc), Bhawna Gomber (Postdoc), Lindsey Gray (Ph.D. 2012), Monika Grothe (Scientist), Wen Guan (Engineer with Ph.D. 2022), Andrew Straiton Hard (Ph.D. 2018), Yang Heng (Ph.D. 2019), Usama Hussain (Ph.D. 2020), Haoshuang Ji (Ph.D. 2019), Xiangyang Ju (Ph.D. 2018), Laser Seymour Kaplan (Ph.D. 2019), Lashkar Kashif (Postdoc 2019), Pamela Klabbers (Scientist), Evan Koenig (B.S. 2018, Intern), Amanda Kaitlyn Kruse (Ph.D. 2015), Armando Lanaro (Senior Scientist), Jessica Leonard (Ph.D. 2011), Aaron Levine (Ph.D. 2016), Andrew Loeliger (Ph.D. 2022), Kenneth Long (Ph.D. 2019), Jithin Madhusudanan Sreekala (Ph.D. 2022), Yao Ming (Ph.D. 2018), Isobel Ojalvo (Ph.D. 2014, Postdoc), Lauren Melissa Osojnak (Ph.D. 2020), Tom Perry (Ph.D. 2016), Elois Petruska (B.S. 2021), Yan Qian (Undergraduate Student 2023), Tyler Ruggles (Ph.D. 2018, Postdoc), Tapas Sarangi (Scientist), Victor Shang (Ph.D. 2024), Manuel Silva (Ph.D. 2019), Nick Smith (Ph.D. 2018), Amy Tee (Postdoc 2023), Stephen Trembath-Reichert (M.S. 2020), Ho-Fung Tsoi (Ph.D. 2024), Devin Taylor (Ph.D. 2017), Wren Vetens (Ph.D. 2024), Alex Zeng Wang (Ph.D. 2023), Fuquan Wang (Ph.D. 2019), Nate Woods (Ph.D. 2017), Hongtao Yang (Ph.D. 2016), Fangzhou Zhang (Ph.D. 2018), Rui Zhang (Postdoc 2025), Chen Zhou (Postdoc 2021)

UW–Madison joins new NSF-Simons AI Institute for the Sky

This post is modified from the original news story from Northwestern University

A large multi-institutional collaboration, led by Northwestern University and including UW–Madison physics professors Keith Bechtol, Kyle Cranmer, and Moritz Münchmeyer, has received a $20 million grant to develop and apply new artificial intelligence (AI) tools to astrophysics research and deep space exploration.

Jointly funded by the National Science Foundation (NSF) and the Simons Foundation, the highly competitive grant will establish the NSF-Simons AI Institute for the Sky (SkAI, pronounced “sky”). SkAI is one of two National AI Research Institutes in Astronomy announced today. Northwestern astrophysicist Vicky Kalogera is principal investigator of the grant and will serve as the director of SkAI. Northwestern AI expert Aggelos Katsaggelos is a co-principal investigator of the grant.

The new institute will unite multidisciplinary researchers to develop innovative, trustworthy AI tools for astronomy. These tools will be used to pursue breakthrough discoveries by analyzing large astronomy datasets, transforming physics-based simulations and more. With unprecedentedly large sky surveys poised to launch, including from the Vera C. Rubin Observatory in Chile, astronomers will require smarter, more efficient tools to accelerate the mining and interpretation of increasingly large datasets. SkAI will fulfill a crucial role in developing and refining these tools.

Through machine learning maps, cosmic history comes into focus

By Jason Daley, UW–Madison College of Engineering

For millennia, humans have used optical telescopes, radio telescopes and space telescopes to get a better view of the heavens.

Today, however, one of the most powerful tools for understanding the cosmos is the computer chip: Cosmologists rely on processing power to analyze astronomical data and create detailed simulations of cosmic evolution, galaxy formation and other far-out phenomena. These powerful simulations are starting to answer fundamental questions of how the universe began, what it is made of and where it’s likely headed.

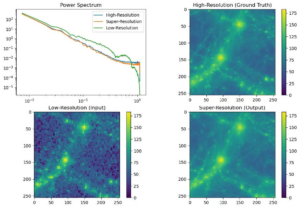

“It is extremely expensive to run these simulations and basically takes forever,” says Kangwook Lee, an assistant professor of electrical and computer engineering at the University of Wisconsin–Madison. “So they cannot run them for large-scale simulations or for high-resolution at the same time. There are a lot of issues coming from that.”

Instead, machine learning expert Lee and physics colleagues Moritz Münchmeyer and Gary Shiu are using emerging artificial intelligence techniques to speed up the process and get a clearer view of the cosmos.

Machine Learning meets Physics

Machine learning and artificial intelligence are certainly not new to physics research — physicists have been using and improving these techniques for several decades.

In the last few years, though, machine learning has been having a bit of an explosion in physics, which makes it a perfect topic on which to collaborate within the department, the university, and even across the world.

“In the last five years in my field, cosmology, if you look at how many papers are posted, it went from practically zero to one per day or so,” says assistant professor Moritz Münchmeyer. “It’s a very, very active field, but it’s still in an early stage: There are almost no success stories of using machine learning on real data in cosmology.”

Münchmeyer, who joined the department in January, arrived at a good time. Professor Gary Shiu was a driving force in starting the virtual seminar series “Physics ∩ ML” early in the pandemic, which now has thousands of people on the mailing list and hundreds attending the weekly or bi-weekly seminars by Zoom. As it turned out, physicists across fields were eager to apply their methods to the study of machine learning techniques.

“So it was natural in the physics department to organize the people who work on machine learning and bring them together to exchange ideas, to learn from each other, and to get inspired,” Münchmeyer says. “Gary and I decided to start an initiative here to more efficiently focus department activities in machine learning.”

Currently, that initiative includes Münchmeyer, Shiu, Tulika Bose, Sridhara Dasu, Matthew Herndon, and Pupa Gilbert, and their research group members. They watch the Physics ∩ ML seminar together, then discuss it afterwards. On weeks that the virtual seminar is not scheduled, the group hosts a local speaker — from physics or elsewhere on campus — who is doing work in the realm of machine learning.

In the next few years, the Machine Learning group in physics looks to build on the momentum the field currently has. For example, they hope to secure funding to hire postdoctoral fellows who can work within a group or across groups in the department. Also, the hiring of Kyle Cranmer, one of the best-known researchers in machine learning for physics, as Director of the American Family Data Science Institute and as a physics faculty member will immediately connect machine learning activities in this department with those in computer sciences, statistics, and the Information School, as well as other areas on campus.

“There are many people [on campus] actively working on machine learning for the physical sciences, but there was not a lot of communication so far, and we are trying to change that,” Münchmeyer says.

Machine Learning Initiatives in the Department (so far!)

Kevin Black, Tulika Bose, Sridhara Dasu, Matthew Herndon and the CMS collaboration at CERN use machine learning techniques to improve the sensitivity of new physics searches and increase the accuracy of measurements.

Pupa Gilbert uses machine learning to understand patterns in nanocrystal orientations (detected with her synchrotron methods) and fracture mechanics (detected at the atomic scale with molecular dynamics methods developed by her collaborator at MIT).

Moritz Münchmeyer develops machine learning techniques to extract information about fundamental physics from the massive amount of complicated data of current and upcoming cosmological surveys.

Gary Shiu develops data science methods to tackle computationally complex systems in cosmology, string theory, particle physics, and statistical mechanics. His work suggests that Topological Data Analysis (TDA) can be integrated into machine learning approaches to make AI interpretable — a necessity for learning physical laws from complex, high dimensional data.