This story is adapted from the HAWC Collaboration press release. Microquasars—compact regions surrounding a black hole with a mass several times that of its companion star—have long been recognized as powerful particle accelerators within our galaxy. The enormous jets spewing out of microquasars are thought to play an important role in the production of galactic cosmic rays, although [...]

Read the full article at: https://wipac.wisc.edu/hawc-detection-of-an-ultra-high-energy-gamma-ray-bubble-around-a-microquasar/

First plasma marks major milestone in UW–Madison fusion energy research

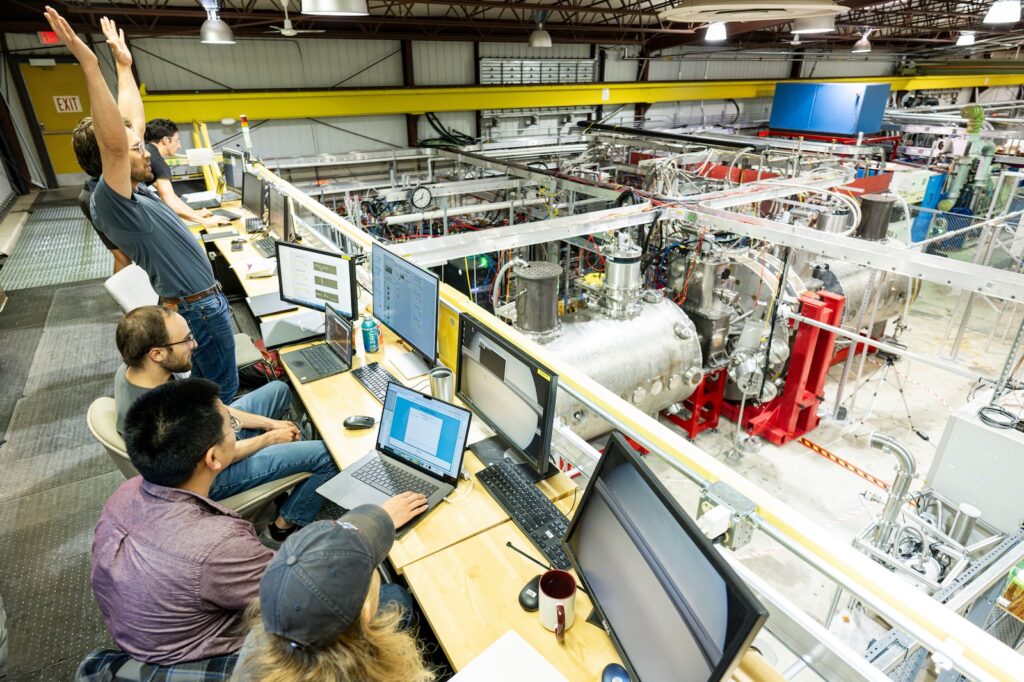

A fusion device at the University of Wisconsin–Madison generated plasma for the first time Monday, opening a door to making the highly anticipated, carbon-free energy source a reality.

Over the past four years, a team of UW–Madison physicists and engineers has been constructing and testing the fusion energy device, known as WHAM (Wisconsin HTS Axisymmetric Mirror), in UW’s Physical Sciences Lab in Stoughton. It transitioned to operations mode this week, marking a major milestone for the yearslong research project, which has received support from the U.S. Department of Energy.

“The outlook for decarbonizing our energy sector is just much higher with fusion than anything else,” says Cary Forest, a UW–Madison physics professor who has helped lead the development of WHAM. “First plasma is a crucial first step for us in that direction.”

WHAM started in 2020 as a partnership between UW–Madison, MIT and the company Commonwealth Fusion Systems. Now, WHAM will operate as a public-private partnership between UW–Madison and spinoff company Realta Fusion Inc., positioning it as a major force for fusion research advances at the university.

The largest magnetic fields in galaxy clusters have been revealed for the first time

By Alex Lazarian, Yue Hu, and Ka Wai Ho

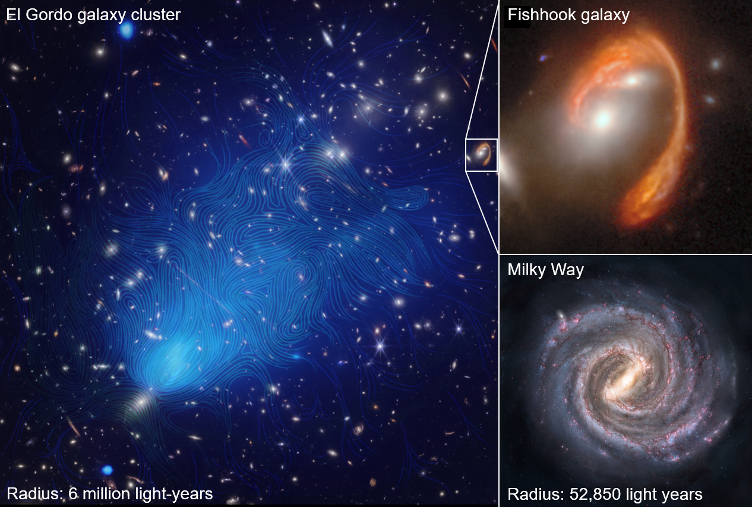

Galaxy clusters, immense assemblies of galaxies, gas, and elusive dark matter, form the cornerstone of our Universe’s grandest structure, the cosmic web. These clusters are not just gravitational anchors, but dynamic realms profoundly influenced by magnetism. The magnetic fields within these clusters are pivotal, shaping the evolution of these cosmic giants. They orchestrate the flow of matter and energy, directing accretion and thermal flows, and are vital in accelerating and confining high-energy charged particles (cosmic rays).

However, mapping magnetic fields on the scale of galaxy clusters has posed a formidable challenge. The vast distances and complex interactions with magnetized, turbulent plasmas diminish the polarization signal, the traditional tracer of magnetic fields. Here, a groundbreaking technique called synchrotron intensity gradients (SIG), developed by a team of UW–Madison astronomers and physicists led by astronomy professor Alexandre Lazarian, marks a turning point. The team shifted the focus from polarization to the spatial variations in synchrotron intensity. This innovative approach peels back layers of cosmic mystery, offering a new way to observe and comprehend the all-important magnetic tapestry on scales of millions of light-years.
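As a rough illustration of the idea behind SIG, the sketch below computes the spatial gradient of a small synthetic intensity map and rotates each gradient by 90 degrees to estimate a plane-of-sky field orientation. The rotation reflects the premise of gradient techniques that intensity gradients tend to lie perpendicular to the local magnetic field. The synthetic map, the function names, and the pixel-by-pixel treatment (real analyses average gradients over sub-blocks of many pixels) are simplifying assumptions for illustration, not the team's pipeline.

```python
import math

def intensity_gradient(image):
    """Central-difference gradient (d/dx, d/dy) at interior pixels of a 2D list."""
    rows, cols = len(image), len(image[0])
    grads = {}
    for y in range(1, rows - 1):
        for x in range(1, cols - 1):
            gx = (image[y][x + 1] - image[y][x - 1]) / 2.0
            gy = (image[y + 1][x] - image[y - 1][x]) / 2.0
            grads[(y, x)] = (gx, gy)
    return grads

def field_orientation_deg(gx, gy):
    """Orientation in [0, 180) degrees, perpendicular to the gradient.

    This is an orientation, not a direction: 0 and 180 describe the same line.
    """
    gradient_angle = math.degrees(math.atan2(gy, gx))
    return (gradient_angle + 90.0) % 180.0

# Synthetic map (assumption): intensity varies only along y,
# so bright structures are elongated along the x axis.
image = [[math.sin(0.5 * y) for x in range(8)] for y in range(8)]

grads = intensity_gradient(image)
orientations = {
    pixel: field_orientation_deg(gx, gy)
    for pixel, (gx, gy) in grads.items()
    if math.hypot(gx, gy) > 1e-6  # skip pixels with no measurable gradient
}
```

For this toy map every gradient points along the y axis, so the inferred orientations all lie along the x axis, parallel to the elongated structures.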

A landmark study published in Nature Communications has employed the SIG technique to unveil the enigmatic magnetic fields within five colossal galaxy clusters, including the monumental El Gordo cluster, observed with the Very Large Array (VLA) and MeerKAT telescope. This colossal cluster, formed 6.5 billion years ago, represents a significant portion of cosmic history, dating back to nearly half the current age of the universe. The findings in El Gordo, characterized by the largest magnetic fields observed, provide crucial insights into the structure and evolution of galaxy clusters.

The research is a fruitful collaboration between the UW–Madison team and their Italian colleagues, including Gianfranco Brunetti, Annalisa Bonafede, and Chiara Stuardi from the Istituto di Radioastronomia (Bologna, Italy) and the University of Bologna. Brunetti, a renowned expert in the high-energy physics of galaxy clusters, is enthusiastic about the potential that the SIG technique holds for exploring magnetic field structures on even larger scales, such as the megahalos recently discovered by him and his colleagues.

Echoing this excitement is the study’s lead researcher, physics graduate student Yue Hu.

“This research marks a significant milestone in astrophysics,” Hu says. “Utilizing the SIG method, we’ve observed and begun to comprehend the nature of magnetic fields in galaxy clusters for the first time. This breakthrough heralds new possibilities in our quest to unravel the mysteries of the universe.”

This study lays the groundwork for future explorations. With the SIG method’s proven effectiveness, scientists are optimistic about its application to even larger cosmic structures that have been detected recently with the Square Kilometre Array (SKA), promising deeper insights into the mysteries of the Universe’s magnetism and its effects on the evolution of the Universe’s large-scale structure.

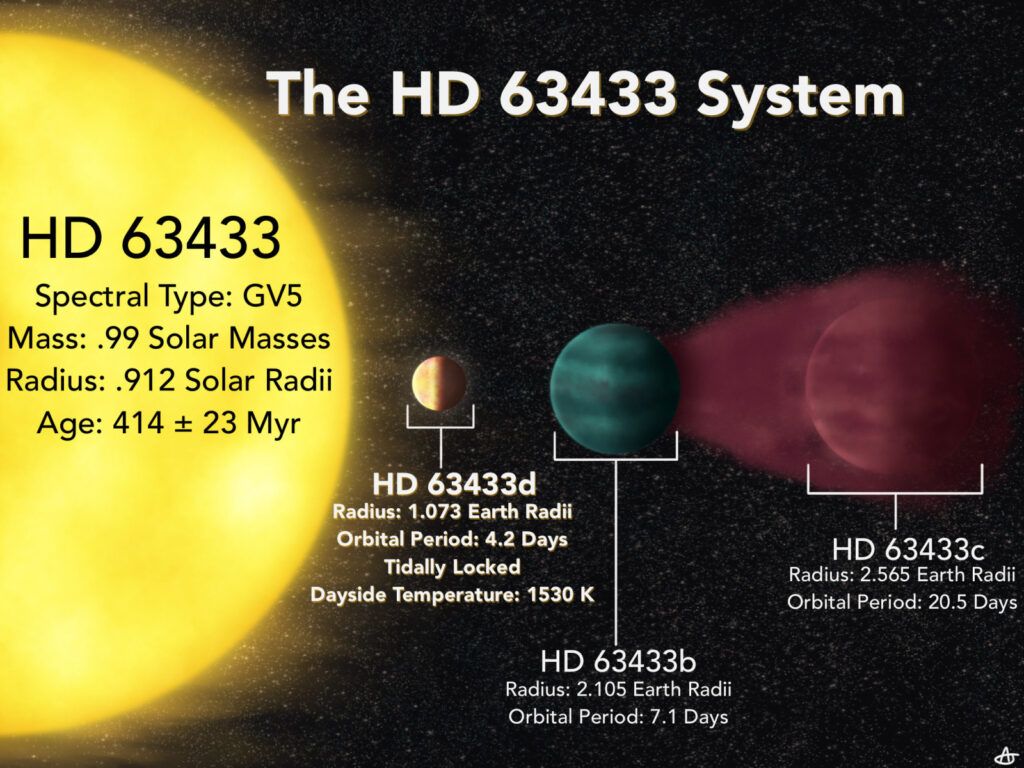

Earth-sized planet discovered in ‘our solar backyard’

A team of astronomers has discovered a planet closer and younger than any other Earth-sized world yet identified. It’s a remarkably hot world whose proximity to our own planet and to a star like our sun marks it as a unique opportunity to study how planets evolve.

The planet is described in a new study published this week by The Astronomical Journal. Melinda Soares-Furtado, a NASA Hubble Fellow at the University of Wisconsin–Madison who will begin work as an astronomy and physics professor at the university in the fall, and recent UW–Madison graduate Benjamin Capistrant, now a graduate student at the University of Florida, co-led the study with co-authors from around the world.

“It’s a useful planet because it may be like an early Earth,” says Soares-Furtado.

A new spin on an old superconductor means that it can be an ideal spintronic material, too

Back in the 1980s, researchers discovered that a bismuthate oxide material was a rare type of superconductor that could operate at higher temperatures. Now, a team of engineers and physicists at the University of Wisconsin–Madison has found that the material, Ba(Pb,Bi)O3, is unique in another way: It exhibits extremely high spin-orbit torque, a property useful in the emerging field of spintronics.

The combination makes this and similar materials potentially important in developing the next generation of fast, efficient memory and computing devices.

The finding was an encouraging surprise to Chang-Beom Eom, a professor of materials science and engineering, and Mark Rzchowski, a professor of physics, both at UW–Madison. “We’re looking to expand the range of materials that can be used in spintronic applications,” says Rzchowski. “We had known from previous work these oxides have a lot of interesting properties, and so were investigating the spintronic characteristics. We weren’t anticipating such a large effect. The origins of this are not theoretically understood, but we can speculate about some interesting physical mechanisms.”

The paper was published Dec. 5, 2023, in the journal Nature Electronics.

In conventional electronics, positive and negative electric charges are used to flip millions or billions of tiny transistors on semiconductor chips or in memory devices. But in spintronics, magnetic fields and interactions with other electrons manipulate a fundamental property of electrons called the spin state, which records information. This approach is much faster, more energy-efficient and more powerful than current semiconductors and will advance the development of quantum computing and low-power devices.

Featured image caption: Chang-Beom Eom, a professor of materials science and engineering, and Mark Rzchowski, a professor of physics, in the lab. Photo: Joel Hallberg.

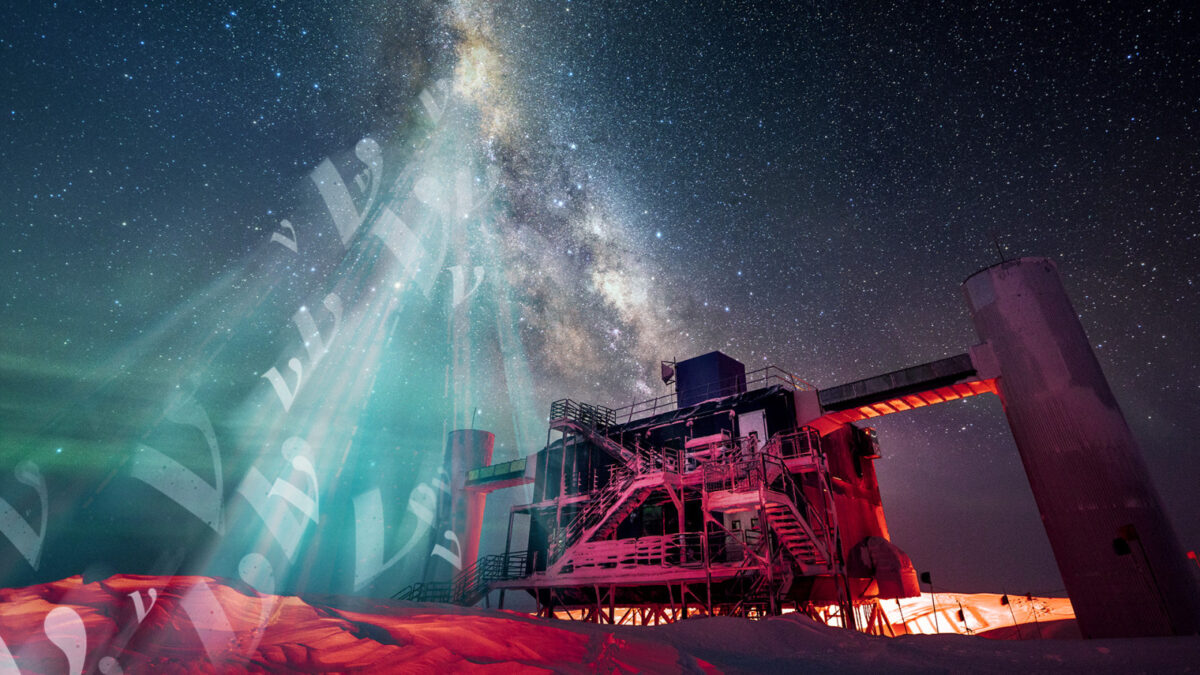

IceCube shows Milky Way galaxy is a neutrino desert

The Milky Way galaxy is an awe-inspiring feature of the night sky, dominating all wavelengths of light and viewable with the naked eye as a hazy band of stars stretching from horizon to horizon. Now,

In a June 30 article in the journal Science, the IceCube Collaboration, an international group of more than 350 scientists, presents new evidence of high-energy neutrino emission from the Milky Way. The findings indicate that the Milky Way produces far fewer neutrinos than the average distant galaxy.

“What’s intriguing is that, unlike the case for light of any wavelength, in neutrinos, the universe outshines the nearby sources in our own galaxy,” says Francis Halzen, a professor of physics at the University of Wisconsin–Madison and principal investigator at IceCube.

The IceCube search focused on the southern sky, where the bulk of neutrino emission from the galactic plane is expected near the center of the galaxy. However, until now, a background of neutrinos and other particles produced by cosmic-ray interactions with the Earth’s atmosphere made it difficult to parse out neutrinos originating from galactic sources — a significant challenge compounded by relatively sparse neutrino production in general.

Two students receive Sophomore Research Fellowships

Two physics majors have been named a fellow or honorable mention in the UW–Madison Sophomore Research Fellowship program. The students are:

- Erica Magee, Mathematics, Physics; working with Martin Zanni (Chemistry)

- Elias Mettner, Physics; working with Abdollah Mohammadi (Physics)

The fellowships include a stipend for each student and for their faculty advisers. Fellowships are funded by grants from the Brittingham Wisconsin Trust and the Kemper K. Knapp Bequest, with additional support from UW System, the Chancellor’s Office and the Provost’s Office.

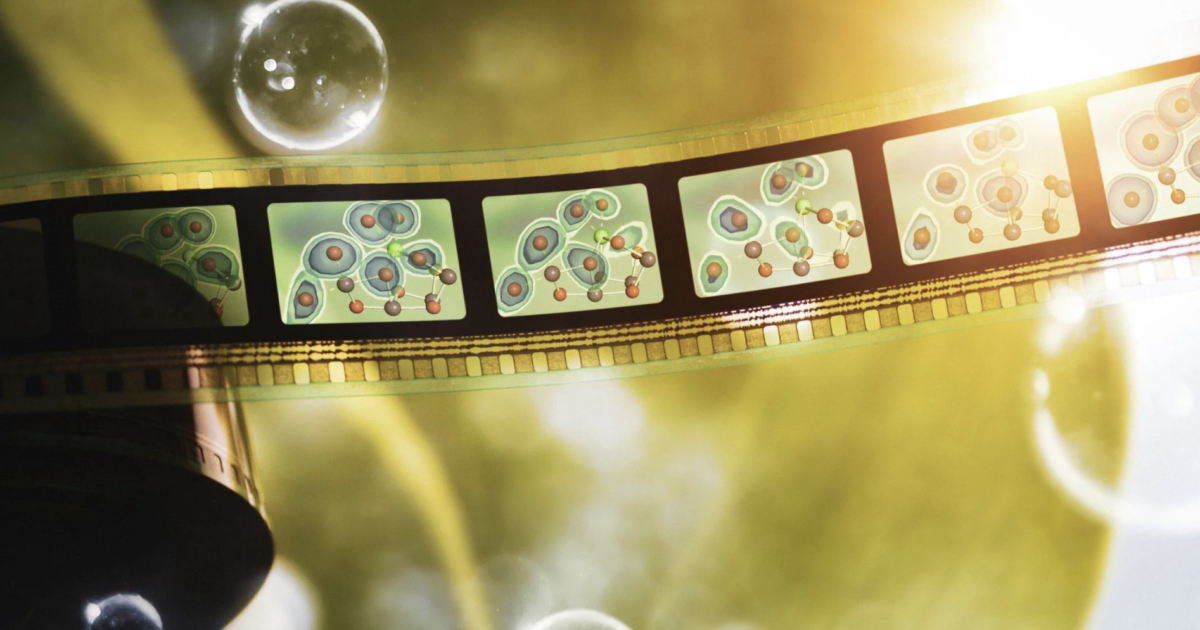

Researchers capture elusive missing step in the final act of photosynthesis

This story was modified from one originally published by SLAC.

Photosynthesis plays a crucial role in shaping and sustaining life on Earth, yet many aspects of the process remain a mystery. One such mystery is how Photosystem II, a protein complex in plants, algae and cyanobacteria, harvests energy from sunlight and uses it to split water, producing the oxygen we breathe. Now, researchers from the Department of Energy’s Lawrence Berkeley National Laboratory and SLAC National Accelerator Laboratory, together with collaborators from the University of Wisconsin–Madison and other institutions, have succeeded in cracking a key secret of Photosystem II.

Using SLAC’s Linac Coherent Light Source (LCLS) and the SPring-8 Angstrom Compact free electron LAser (SACLA) in Japan, they captured for the first time in atomic detail what happens in the final moments leading up to the release of breathable oxygen. The data reveal an intermediate reaction step that had not been observed before.

The results, published today in Nature, shed light on how nature has optimized photosynthesis and are helping scientists develop artificial photosynthetic systems that mimic the natural process, harvesting sunlight to convert carbon dioxide into hydrogen and carbon-based fuels.

“The splitting of water to molecular oxygen by photosynthesis has dramatically reshaped our early planet, eventually leading to complex life forms that rely on oxygen for respiration, including ourselves,” says Uwe Bergmann, a physics professor at UW–Madison. “Capturing the final steps of this process in real time with x-ray laser pulses, and bringing to light the individual atoms involved, is thrilling and adds an important piece to solving this over 3-billion-year-old puzzle.”

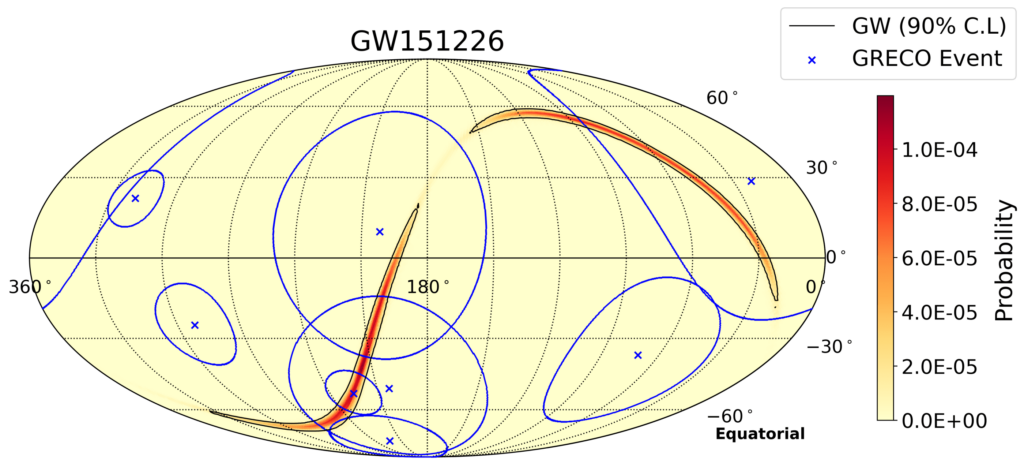

IceCube search for sub-TeV neutrino emission associated with LIGO/Virgo gravitational waves

Gravitational waves (GWs) are produced by some of the most extreme astrophysical phenomena, such as black hole and neutron star mergers. These phenomena have long been suspected to be astrophysical sources of neutrinos, ghostlike cosmic messengers hurtling through space unimpeded. Thus far, common astrophysical sources of neutrinos and photons, as well as common sources of gravitational waves and light, have been identified. However, no one has yet detected sources that emit both gravitational waves and neutrinos.

In a study recently submitted to The Astrophysical Journal, the IceCube Collaboration performed a new search for neutrinos from GW sources at the GeV-TeV scale. Although no evidence of neutrino emission was found, the search set new upper limits on the number of neutrinos associated with each gravitational wave source and on the total energy emitted in neutrinos by each source.

Previously, IceCube searched for neutrinos from GW sources using the TeV-PeV neutrinos detected by the main IceCube Neutrino Observatory, a cubic-kilometer detector enveloped in Antarctic ice at the South Pole. This time, collaborators used data taken with the DeepCore array, the innermost component of IceCube, whose sensors are more densely spaced than those in the main array. DeepCore can detect lower-energy neutrinos (GeV and upward) than is possible with the larger main array.

The analysis looked for temporal and spatial correlations between 90 GW events detected by the Laser Interferometer Gravitational-Wave Observatory (LIGO) and the Virgo gravitational wave detectors and neutrinos detected by DeepCore. The researchers found no significant excess of neutrinos from the direction of the GW events but set stringent upper limits on the neutrino flux and limits on the energies associated with neutrinos from each GW source.
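The notion of a temporal and spatial correlation can be sketched with a toy coincidence filter that keeps only neutrino candidates arriving near a GW event in both time and sky direction. Everything here is a hypothetical illustration: the event records, the 500-second half-window, and the 5-degree radius are assumed values, and a real search would work with GW localization sky maps and a statistical likelihood rather than a single position and a hard cut.

```python
import math

def angular_separation_deg(ra1, dec1, ra2, dec2):
    """Great-circle angle between two sky positions; all arguments in degrees."""
    ra1, dec1, ra2, dec2 = map(math.radians, (ra1, dec1, ra2, dec2))
    cos_sep = (math.sin(dec1) * math.sin(dec2)
               + math.cos(dec1) * math.cos(dec2) * math.cos(ra1 - ra2))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_sep))))

def coincident_neutrinos(gw_event, neutrinos, half_window_s, max_sep_deg):
    """Return neutrinos close to the GW event in both time and direction."""
    return [
        nu for nu in neutrinos
        if abs(nu["time_s"] - gw_event["time_s"]) <= half_window_s
        and angular_separation_deg(nu["ra"], nu["dec"],
                                   gw_event["ra"], gw_event["dec"]) <= max_sep_deg
    ]

# Hypothetical toy data (not real events).
gw = {"time_s": 1000.0, "ra": 120.0, "dec": -30.0}
neutrinos = [
    {"time_s": 1200.0, "ra": 121.0, "dec": -29.5},   # close in time and direction
    {"time_s": 1200.0, "ra": 300.0, "dec": 40.0},    # close in time only
    {"time_s": 90000.0, "ra": 120.0, "dec": -30.0},  # close in direction only
]

matches = coincident_neutrinos(gw, neutrinos, half_window_s=500.0, max_sep_deg=5.0)
```

Only the first toy neutrino passes both cuts. A "significant excess" would then mean more such matches than the atmospheric background alone is expected to produce.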

“These results do not mean that all hope is lost for detecting such joint emissions,” says Aswathi Balagopal V., a postdoctoral associate at UW–Madison and co-lead of the analysis. “With improvements in directional reconstructions for low-energy neutrinos, which is expected with better methods and with the inclusion of the IceCube Upgrade, we will be able to achieve better sensitivities for such joint searches, potentially leading to a positive discovery.”

UW–Madison researchers key in search for neutrino emission from the brightest gamma-ray burst ever detected

This story was originally published by WIPAC.

On October 9, 2022, an unusually bright pulse of high-energy radiation whizzed past Earth, captivating astronomers around the world. The luminous emission came from a gamma-ray burst (GRB), one of the most powerful classes of explosions in the universe. Named GRB 221009A, it triggered detectors at NASA’s Gamma-ray Burst Monitor and Large Area Telescope (both on board the Fermi Gamma-ray Space Telescope), the Neil Gehrels Swift Observatory, and the Wind spacecraft, as well as other telescopes that quickly turned to the GRB site to study its aftermath.

This record-shattering GRB is one of the closest GRBs and the brightest ever spotted, earning it the nickname BOAT (“brightest of all time”). It is believed to come from an exploding star and likely signals the birth of a black hole.

In a new study by the IceCube Collaboration, published today in The Astrophysical Journal Letters, UW–Madison researchers presented results of one of five searches for neutrino emission from GRB 221009A that leveraged the full detector range, covering nine orders of magnitude in energy. Because no significant emission was found across samples spanning 10 MeV to 10 PeV, the results are the most stringent constraints on neutrino emission from GRBs.
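The "nine orders of magnitude" figure follows directly from the quoted energy bounds, as the short check below shows. The helper name and the unit table are illustrative choices, not part of the study.

```python
import math

# Metric-prefix multipliers expressed in electronvolts.
EV_PER_UNIT = {"MeV": 1e6, "GeV": 1e9, "TeV": 1e12, "PeV": 1e15}

def orders_of_magnitude(low, low_unit, high, high_unit):
    """Number of powers of ten between two energies given with units."""
    low_ev = low * EV_PER_UNIT[low_unit]
    high_ev = high * EV_PER_UNIT[high_unit]
    return math.log10(high_ev / low_ev)

# Full range quoted for the combined searches: 10 MeV to 10 PeV.
full_range = orders_of_magnitude(10, "MeV", 10, "PeV")
```

Here 10 PeV is 10^16 eV and 10 MeV is 10^7 eV, so the ratio is 10^9: a factor of a billion in energy.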

As some of the most energetic sources in the universe, GRBs have long been considered a possible astrophysical source of neutrinos—tiny “ghostlike” particles that travel through space and large amounts of matter unhindered. These high-energy neutrinos are of particular interest to the National Science Foundation-supported IceCube Neutrino Observatory, a gigaton-scale neutrino detector at the South Pole.

IceCube is run by the international IceCube Collaboration, which comprises over 350 scientists from 58 institutions around the world. The Wisconsin IceCube Particle Astrophysics Center (WIPAC), a research center at UW–Madison, is the lead institution for the IceCube project.

IceCube has previously searched for neutrino emission from GRBs, but thus far, no correlation has been found between high-energy neutrinos and GRBs. The recent observation of GRB 221009A presented IceCube with the best opportunity yet to search for neutrino emission from GRBs.

“Not only was this GRB the brightest ever detected in gamma rays, it also occurred in a region of the sky where IceCube is very sensitive,” says UW–Madison physics professor Justin Vandenbroucke, who helped lead the analysis.

For the study, collaborators carried out five complementary IceCube analyses that encompassed the full energy range of the detector. Each analysis targeted a specific energy range, with the idea of covering as wide an energy range as possible. UW–Madison physics PhD student Jessie Thwaites was one of the main analyzers.

Thwaites performed a “fast response” analysis based on real-time data from the South Pole to search for high-energy (0.10 teraelectronvolts to 10 petaelectronvolts) neutrinos from the direction of the GRB. They chose two time windows: one three-hour window covering all of the triggers reported in real time, and one covering two days. Their analysis, which set strong constraints on neutrino emission from GRBs, was quickly reported to the community, within hours of the GRB being detected by the gamma-ray satellites.

“In the high energies, our upper limits are very constraining—they are below the observations from gamma-ray telescopes,” says Thwaites. “These upper limits, combined with the observations from many electromagnetic telescopes, give us more information about GRBs as potential particle accelerators.”

Because this GRB is so bright, and because it has been so well studied, IceCube is able to place constraining upper limits on neutrino emission models proposed for this specific GRB. These constraints will enable better understanding of how GRBs work.

The collaborators are already developing new methods to improve searches for neutrinos from GRBs and other transient astrophysical sources. In addition, future upgrades and proposed extensions of IceCube, including the IceCube Upgrade project and IceCube-Gen2, could be the key to finding high-energy neutrino emission from GRBs or other transients.

According to Vandenbroucke, “This GRB illustrates the capabilities of IceCube for real-time follow-up of astrophysical transients. IceCube views the entire sky, all the time, over a factor of a billion in energy range. There is likely a burst of neutrinos already flying towards us from some other cosmic source, and we are ready for it.”

“Limits on Neutrino Emission from GRB 221009A from MeV to PeV using the IceCube Neutrino Observatory,” The IceCube Collaboration: R. Abbasi et al. Published in The Astrophysical Journal Letters. arxiv.org/abs/2302.05459