Graduate Students

In search of new particles like the Higgs boson

Could there be more particles like the Higgs boson? For the first time, the CMS experiment has searched for the decay of the Higgs boson into two more Higgs-boson-like particles with unequal masses.

Written by: Ashling Quinn ’23 and Anagha Aravind (physics PhD student), originally published by the CMS Collaboration

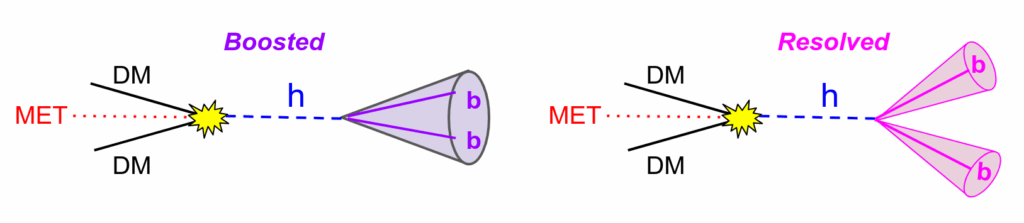

Some theories suggest that the Higgs boson might occasionally decay into particles that have never been seen before and have Higgs-boson-like properties. These new particles are unstable and quickly decay to known Standard Model particles in the CMS detector. While past CMS results have explored scenarios where the Higgs boson decays to such short-lived particles of identical masses, in this study we searched for a new possibility: what if the Higgs boson decays into two different new particles instead of two identical ones?

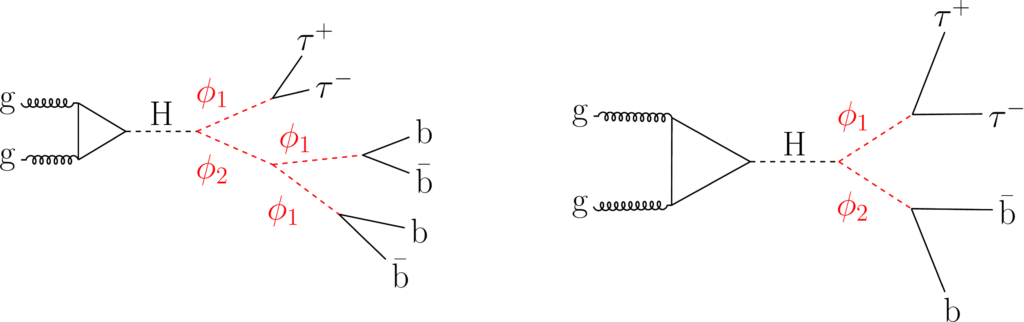

Calling the new particles ϕ1 and ϕ2 (ϕ2 is the heavier one), we consider cases where one of the ϕ particles decays to two bottom quarks and the other decays to two τ leptons. This final state is favourable, since it has a relatively large probability of occurring and can be used to select interesting signal-like events from our datasets.

If the ϕ2 particle is at least twice as heavy as ϕ1, it could decay into an intermediate state with two ϕ1 particles before these decay into Standard Model particles. “We call this ‘cascade’ decay,” says Ashling Quinn, a PhD student working on the analysis, “since the extra step makes it resemble a waterfall.” So the decays can look like H → ϕ1ϕ2 → 2τ2b (non-cascade) or H → ϕ1ϕ2 → 2τ4b (cascade). These are shown in the figure below.
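The mass condition above can be written as a small check. The sketch below is only an illustration: the function name, the mass values in the usage lines, and the 125 GeV Higgs boson mass used for the kinematic limit are assumptions added here, not part of the CMS analysis code.

```python
HIGGS_MASS_GEV = 125.0  # approximate Higgs boson mass (assumption for this sketch)


def allowed_final_states(m_phi1, m_phi2, m_higgs=HIGGS_MASS_GEV):
    """List the final states open to H -> phi1 phi2 for the given masses (GeV)."""
    if m_phi1 > m_phi2:
        raise ValueError("phi2 is defined as the heavier particle")
    # The Higgs boson can only decay to the pair if both masses fit within its own.
    if m_phi1 + m_phi2 > m_higgs:
        return []
    # Non-cascade: one phi decays to two b quarks, the other to two tau leptons.
    states = ["2tau2b"]
    # Cascade: phi2 -> phi1 phi1 is possible only if phi2 is at least twice as heavy.
    if m_phi2 >= 2.0 * m_phi1:
        states.append("2tau4b")
    return states


print(allowed_final_states(20.0, 30.0))  # cascade is kinematically closed
print(allowed_final_states(15.0, 60.0))  # cascade is open as well
```

The cascade state is listed in addition to the non-cascade one because the text says only that ϕ2 could decay this way when the mass condition is met, not that it must.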

The strategy of this search is to reconstruct the decay of the ϕ1 boson into two τ leptons and to obtain the ϕ1 mass distribution. The presence of the ϕ1 signal is expected to appear as a peak on top of a flat background distribution.

To enhance the separation between signal and background events, we trained a machine learning model with several kinematic distributions as input. Another PhD student, Anagha Aravind, describes how this works: “Since the ϕ bosons have relatively low mass, their final state will be collimated in a narrow cone. The machine learning model exploits this feature, along with other subtle differences, to classify events as either signal or background.”

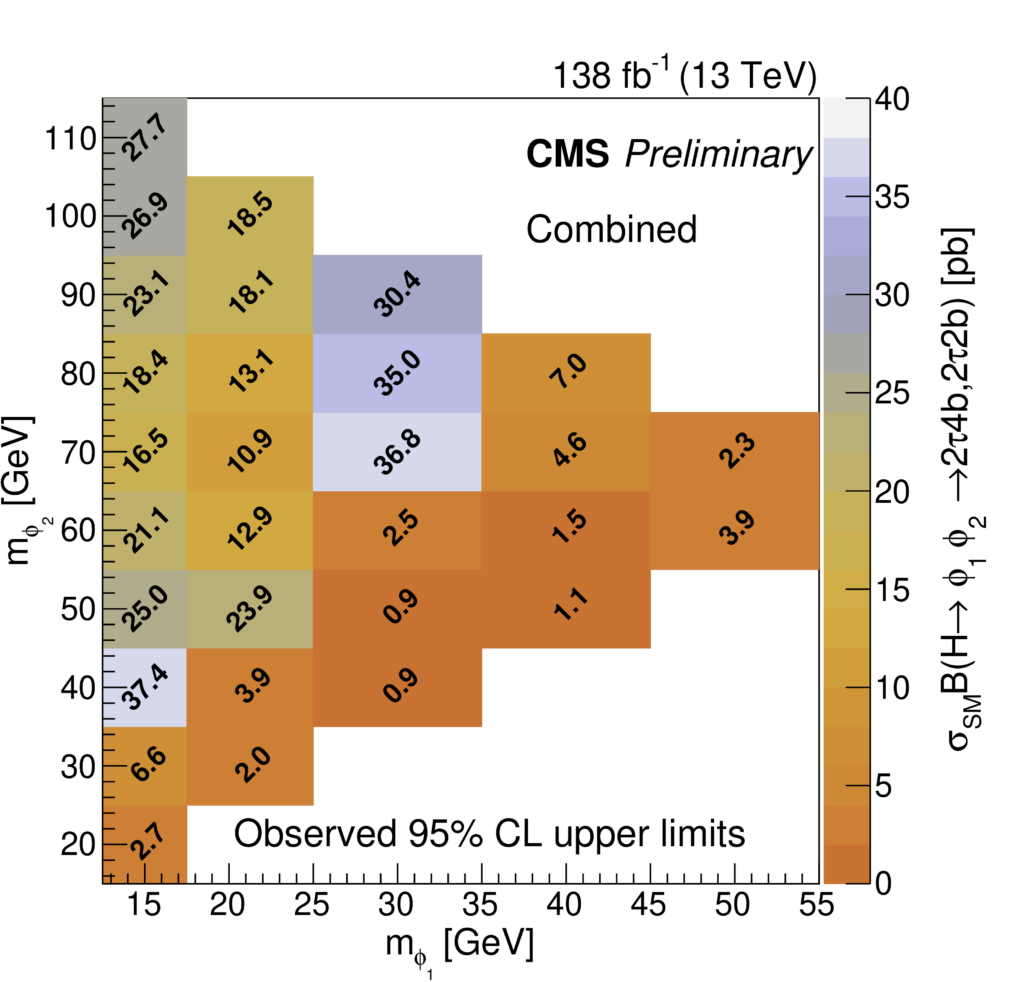

No significant excess of events was observed in the mass distribution. Upper limits were extracted on the rates – or “cross sections” – of the considered processes for a range of ϕ1 and ϕ2 boson masses. These results provide valuable constraints on theoretical models predicting such signatures and help guide future theoretical and experimental efforts.

This was the first search within the CMS Collaboration for Higgs boson decays into two Higgs-boson-like particles with unequal masses. The results pave the way for a promising future: the dominant source of uncertainty was statistical, which means more data from Run 3 and the High-Luminosity LHC will improve the sensitivity. If we think of ourselves as detectives hunting for new particles, more data means more clues to solve the mystery.

Sam Kramer, Benjamin Beyer named L&S Teaching Mentors

This post is adapted from the L&S teaching mentor website

L&S announced their 2026 Teaching Mentors, including physics PhD students Sam Kramer and Benjamin Beyer. Kramer earned the additional honor of being named a Lead Teaching Mentor.

The L&S Teaching Mentors are the heart of our college-level Teaching Assistant (TA) Trainings. They are exceptionally passionate and knowledgeable teachers with proven track records for teaching excellence who work closely with the L&S TA Training and Support Team to facilitate various trainings and mentor L&S TAs. Each Teaching Mentor is chosen through a competitive selection process for their enthusiasm and capacity to help others develop as effective and equitable teachers. They serve not only as role models, but also as sources of support and knowledge for both new and returning TAs.

Lead Teaching Mentors have served as Teaching Mentors more than once and take on an additional leadership role within the program. They support first-time Teaching Mentors as they learn to facilitate the TA Training curriculum. They also work with L&S TA Training and Support Team leadership to strengthen program offerings. In short, they are an invaluable source of expertise and creativity, and they serve as deeply valued collaborators.

Sam is a fourth-year Ph.D. candidate in the Department of Physics and has been teaching for Physics 202, a course for engineering major undergraduates that focuses on electricity, magnetism, and optics, since arriving in Madison. Sam also taught for a similar course as an undergraduate at Saint Louis University. In this role, he leads both discussions, which focus on problem solving, and labs, which provide hands-on experience with the concepts being taught. Physics can be an overwhelming subject, so Sam tries to distill the material into manageable chunks for the students, emphasizing the broader concepts underlying the formulas students use and drawing explicit connections between parts of the curricula. This is meant to develop the dynamic problem solving skills students need when encountering problems they have not seen before.

As an undergraduate, Benjamin began teaching introductory courses in physics. Since matriculating as a graduate student in the Department of Physics, Benjamin has continued to teach a wide range of courses, from courses emphasizing experimental laboratory skills to courses with a theoretical flavor. His approach blends connecting with students with breaking down complicated subjects, such that students can connect with the material in the context of their own experiences. He believes that learning physics is just as much about learning how to troubleshoot and make mistakes safely as it is about getting the right answer. Ultimately, his favorite part of teaching is helping to take the intimidation factor out of physics and watching students gain confidence in their own abilities.

Congrats, Wisconsin Space Grant Consortium award winners!

The Wisconsin Space Grant Consortium (WSGC) recently announced its 2026 Spring Awardees, including several UW–Madison physics students. WSGC award winners are Wisconsin students, educators, faculty, and research teams conducting NASA-aligned research, STEM education, and aerospace outreach across the state. These awards strengthen Wisconsin’s STEM workforce pipeline through hands-on research, outreach programming, academic advancement, and national aerospace collaborations.

Physics graduate student winners, who all won the WSGC Graduate and Professional Research Fellowship Award, include:

- Robin Chisolm

- Zachary Curtis-Ginsberg

- Maggie Ju

- Alicia Mand

- Julia Sheffler

- Faizah Siddique

- Perri Zilberman

Physics undergraduate winners (and their department research group, if applicable), include:

- Natalie Broderick (Zweibel group), Undergraduate Research Award

- Henry DePew (McCammon group), Undergraduate Scholarship Award

Other undergraduates conducting research in physics department labs include:

- Annelise Alvin (Soares-Furtado group), Undergraduate Scholarship Award

- Anna Castello (Bechtol group), Undergraduate Scholarship Award

- Chris Pate (Timbie group), Undergraduate Research Award

Through the 2026 Spring Awards, WSGC:

- Funds 59 competitive awards at 12 institutions statewide

- Invests $341,951 in scholarships, internships, outreach, faculty initiatives, and research

- Expands aerospace outreach through educators, nonprofit partners, and community programming

- Supports undergraduate, graduate, and faculty research directly aligned with NASA priorities

- Enables students to participate in NASA internships and industry workforce development experiences

- Builds capacity for future aerospace and STEM professionals in Wisconsin

Lekshmi Thulasidharan earns campus TA award

This post is modified from one posted by the Graduate School

Thirty-two exceptional graduate students, including physics PhD student Lekshmi Thulasidharan, have been selected as recipients of the 2025-26 Campus-Wide Teaching Assistant Awards, recognizing their strengths and commitment surrounding the craft of teaching.

UW–Madison employs over 2,400 teaching assistants (TAs) across a wide range of disciplines. Their contributions to the classroom, lab, and field are essential to the university’s educational mission. To recognize the excellence of TAs across campus, the Graduate School, the College of Letters & Science, and the Morgridge Center sponsor these annual awards.

Volunteer judges selected awardees for four categories: early excellence, advanced achievement, capstone teaching, and community-based learning.

Thulasidharan earned both a Capstone Teaching Award and a Dorothy Powelson Award. The Capstone Teaching Award recognizes dissertators at the end of their graduate program with an outstanding teaching record over the course of their UW–Madison tenure. The Dorothy Powelson Awards recognize outstanding performance by TAs in the natural sciences.

Thulasidharan is a student in astronomy professor and physics affiliate professor Elena D’Onghia’s group. Her research focus is on galactic dynamics. She has taught quite a few courses during her years at UW–Madison, with her favorite being Modern Physics. She has also really enjoyed teaching the physics course about Mechanics.

As a teacher, her favorite thing is working closely with students as they learn to tackle difficult physics problems.

“Many students start out feeling intimidated by the material, but through discussions and guided problem-solving sessions they begin to see the logic behind it and grow more confident. Watching that growth over the semester is the most rewarding part of teaching,” she said. “Over the years, teaching has also helped me grow as a person. It has helped me develop confidence and strengthened my communication and mentoring skills.”

Dark Energy Survey scientists release new analysis of how the universe expands

The latest results combined weak lensing and galaxy clustering and incorporated four dark energy probes from a single experiment for the first time.

This story is amended from one published by Fermilab, which includes information about the full results published by the DES collaboration

The Dark Energy Survey (DES) collaboration — including scientists at the University of Wisconsin–Madison — is releasing results that, for the first time, combine all six years of data from weak lensing and galaxy clustering probes. In the paper, which represents a summary of 18 supporting papers, they also present their first results found by combining all four probes — baryon acoustic oscillations (BAO), type-Ia supernovae, galaxy clusters, and weak gravitational lensing — as proposed at the inception of DES 25 years ago.

“We combined multiple approaches to measure dark energy from a single dataset into a summative result,” says Keith Bechtol, physics professor at UW–Madison and DES collaboration scientist. “More than one hundred people have been working on these results for over a decade, and our group is one of many who contributed.”

The analysis yielded new, tighter constraints that narrow down the possible models for how the universe behaves. These constraints are more than twice as strong as those from past DES analyses, while remaining consistent with previous DES results.

“The constraints have gotten tighter and, so far, are consistent with the cosmological model that has withstood ever more stringent tests during the past two decades,” Bechtol says. “The results sharpen the mysteries surrounding the detailed physics that would explain dark energy and dark matter.”

UW–Madison contributions

Bechtol, former physics graduate student Megan Tabbutt, and current graduate student Julián Beas-González all contributed to the current results, developing methods to ensure the data products were scientifically validated. Current Bechtol group postdoc Jason Lee worked on the type Ia supernovae analysis as a graduate student at the University of Pennsylvania.

Bechtol was involved with data collection and curation for DES — a dataset that amounted to over 75,000 individual images with almost 700 million individual stars and galaxies.

“I helped coordinate the effort to assemble, scientifically validate, and document the data products that served as the foundation of the cosmology results presented today,” Bechtol says.

Tabbutt developed a software pipeline to provide detailed characterization for the detection and measurement of stars and galaxies, and Beas-González further refined that pipeline and conducted the final analysis. The analysis used a method known as synthetic source injection, where synthetic stars and galaxies with known properties are inserted into actual night sky images, and the augmented images are consistently re-processed through the regular measurement pipeline.

“From these measurements, we can compare what we measured versus what we injected. It’s a way to translate between the things we measure and the things that are supposed to be out there in the night sky,” Beas-González says. “It can be used as both a diagnostic tool to see how well we’re detecting and measuring things, but it also has other downstream applications.”
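The comparison Beas-González describes can be illustrated with a toy example. This is a minimal sketch, not the DES pipeline: the function name, the dictionary layout, and the magnitudes are all hypothetical, chosen only to show how a detection efficiency and a measurement offset follow from matching injected sources to measured ones.

```python
def compare_injection(injected, measured):
    """Compare injected synthetic sources with what a pipeline recovered.

    injected: dict mapping source id -> injected (true) magnitude
    measured: dict mapping source id -> measured magnitude, detected sources only
    Returns (detection efficiency, mean of measured minus injected magnitude).
    """
    recovered = [sid for sid in injected if sid in measured]
    efficiency = len(recovered) / len(injected) if injected else 0.0
    offsets = [measured[sid] - injected[sid] for sid in recovered]
    mean_offset = sum(offsets) / len(offsets) if offsets else None
    return efficiency, mean_offset


# Hypothetical values: four synthetic sources injected, three recovered.
injected = {"s1": 20.0, "s2": 22.0, "s3": 24.0, "s4": 25.0}
measured = {"s1": 20.1, "s2": 21.9, "s3": 24.3}
efficiency, mean_offset = compare_injection(injected, measured)
print(f"efficiency = {efficiency:.2f}, mean offset = {mean_offset:.2f} mag")
```

In this toy case the faintest source goes undetected, which is the kind of effect a diagnostic like this is meant to expose.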

These downstream applications, including calibrating photometric redshifts and obtaining magnification estimates from weak gravitational lensing, are also important to DES collaboration work. Redshifts help explain matter distribution, and weak lensing affects the counts and sizes of galaxies; including both in data analyses is crucial to mapping matter density in the cosmos.

While the DES work is wrapping up, it is also a launching point for more detailed surveys that will help scientists better understand the makeup, origins, and evolution of the universe.

“It’s a very exciting time to be a grad student in the field,” Beas-González says. “I get to see the final stages of DES, and I feel like there’s this whole generation of young scientists like myself that are excited to collaborate on newer projects, see them to their final stages, and get even better and more constrained results.”

More information on the DES collaboration and the funding for this project can be found here.

The Dark Energy Survey is jointly supported by the U.S. Department of Energy’s Office of Science and the U.S. National Science Foundation.

Velocity gradients key to explaining large-scale magnetic field structure

All celestial bodies — planets, suns, even entire galaxies — produce magnetic fields, affecting such cosmic processes as the solar wind, high-energy particle transport, and galaxy formation. Small-scale magnetic fields are generally turbulent and chaotic, yet large-scale fields are organized, a phenomenon that plasma astrophysicists have tried explaining for decades, unsuccessfully.

In a paper published January 21 in Nature, a team led by scientists at the University of Wisconsin–Madison has run complex numerical simulations of plasma flows that, while leading to turbulence, also develop structured flows due to the formation of large-scale jets. From their simulations, the team has identified a new mechanism for the generation of magnetic fields that can be broadly applied and that has implications ranging from space weather to multimessenger astrophysics.

“Magnetic fields across the cosmos are large-scale and ordered, but our understanding of how these fields are generated is that they come from some kind of turbulent motion,” says the study’s lead author Bindesh Tripathi, a former UW–Madison physics graduate student and current postdoctoral researcher at Columbia University. “Given that turbulence is known to be a destructive agent, the question remains, how does it create a constructive, large-scale field?”

Before working on three-dimensional (3D) magnetic fields, Tripathi investigated systems with hydrodynamic flows and two-dimensional (2D) magnetic fields. After staring at the movies and images of 3D magnetic turbulence, he noticed similarities in the shapes of large-scale flows and large-scale magnetic field structures. But it wasn’t as simple as applying fluid dynamic theory to magnetic field generation: the former may be solved as a 2D problem, whereas the latter must be solved in 3D, making it a much more complex, difficult-to-solve problem.

Tripathi and his colleagues decided to tackle the problem with two key changes from previous research.

The first difference was the input: a constantly replenished velocity gradient. A cyclist hitting a curb head-on, say, experiences a velocity gradient: the wheels stop, but momentum can cause the cyclist to fly over the handlebars. Velocity gradients exist throughout the universe; for example, within different layers of the sun or when two neutron stars merge. The team reasoned that this gradient is likely important to include while studying 3D magnetic fields.

Second, they ran perhaps the most complex simulation to date of magnetic fields in the presence of an unstable velocity gradient — 137 billion grid points in 3D space. Altogether, they ran around 90 simulations, generating 0.25 petabytes of data and using nearly 100 million CPU hours on the Anvil supercomputer at Purdue University.

Ordered magnetic fields spontaneously emerge out of chaotic, tangled fields. This finding is consistent with astrophysical observations. Streamlines of magnetic fields are 3D-rendered and are colored red–blue by the x-component of the field. Streamlines of the electric current density are shown in green; color represents magnitude. Poloidal fields are displayed on the (y,z)-plane, after averaging them over the azimuthal (x) direction. Credit: Tripathi et al.

“We start our simulations with a flow that has a velocity gradient, then we add some tiny perturbations, like moving one fluid particle infinitesimally, we let that perturbation propagate over the system and grow, and then analyze the data over time,” Tripathi says. “Initially, these perturbations lead to turbulent flows and magnetic fields in small-scale structures, then, over time, they emerge into larger, ordered structures.”

When Tripathi ran the same simulations but with an initial velocity gradient that decayed over time, the simulations produced only the chaotic, small-scale patterns. “So that’s really the main key: to have a steady, large-scale gradient in velocity,” he emphasizes.

Adds Paul Terry, physics professor at UW–Madison and senior author of the study: “Magnetic field generation via dynamos has been extensively studied for 70 years, with the frustrating result that the generated fields almost always end up at small scales and highly disordered, unlike observations. This work, therefore, potentially resolves a long-standing issue.”

Though the theory cannot be tested in the distant universe, a lab-based experiment does support the team’s findings: in 2012, colleagues at the Wisconsin Plasma Physics Laboratory were trying to better understand the nature of the magnetic field generation process in a laboratory experiment, but their data did not fit any of the previous models. Tripathi and colleagues’ new theory of magnetic field generation more closely matches the experimental data and helps to resolve the confounding findings.

“This work has the potential to explain the magnetic dynamics relevant in, for example, neutron star mergers and black hole formation, with direct applications to multimessenger astronomy,” Tripathi says. “It may also help better understand stellar magnetic fields and predict gas ejections from the sun toward the earth.”

Top image: The magnetic fields in large-scale structures are organized despite local areas of turbulence. The magnetic field in the Whirlpool Galaxy (M51), captured by NASA’s flying Stratospheric Observatory for Infrared Astronomy (SOFIA), is superimposed on a Hubble telescope picture of the galaxy. The image shows infrared images of grains of dust in the M51 galaxy. Their magnetic orientation largely follows the spiral shape of the galaxy, but it is also being pulled in the direction of the neighboring galaxy at the right of the frame. (Credit: NASA, SOFIA, HAWC+, Alejandro S. Borlaff; JPL-Caltech, ESA, Hubble)

This work was supported by the National Science Foundation (2409206) and the U.S. Department of Energy (DE-SC0022257) through the DOE/NSF Partnership in Basic Plasma Science and Engineering. Anvil at Purdue University was used through allocation TG-PHY130027 from the Advanced Cyberinfrastructure Coordination Ecosystem: Services & Support (ACCESS) program, which is supported by the National Science Foundation (2138259, 2138286, 2138307, 2137603 and 2138296).

Gage Erwin named DOE Computational Science Graduate Fellow

This post is adapted from the DOE’s announcement regarding the Computational Science Fellows

Congrats to physics PhD student Gage Erwin on being named a U.S. Department of Energy Computational Science Graduate Fellow!

The 2025-2026 incoming fellows will learn to apply high-performance computing (HPC) to research in disciplines including machine learning, quantum computing, chemistry, astrophysics, computational biology, energy, engineering and applied mathematics.

The program, established in 1991 and funded by the DOE’s Office of Science and the National Nuclear Security Administration (NNSA), trains top leaders in computational science.

“We are so pleased to congratulate the 30 new fellows,” said Ceren Susut, Associate Director of Science for DOE’s Advanced Scientific Computing Research program. “Each of these incredibly talented people has demonstrated both outstanding academic achievement and tremendous research potential. Their research topics cover some of the highest priorities of the Department of Energy, including quantum computing, artificial intelligence, and science and engineering for energy and nuclear security.”

Fellows receive support that includes a stipend, tuition and fees, and an annual academic allowance. Renewable for up to four years, the fellowship is guided by a comprehensive program of study that requires focused coursework in science and engineering, computer science, applied mathematics and HPC. It also includes a three-month practicum at one of 22 DOE-approved sites across the country, and an annual meeting where fellows present their research in poster and talk formats.

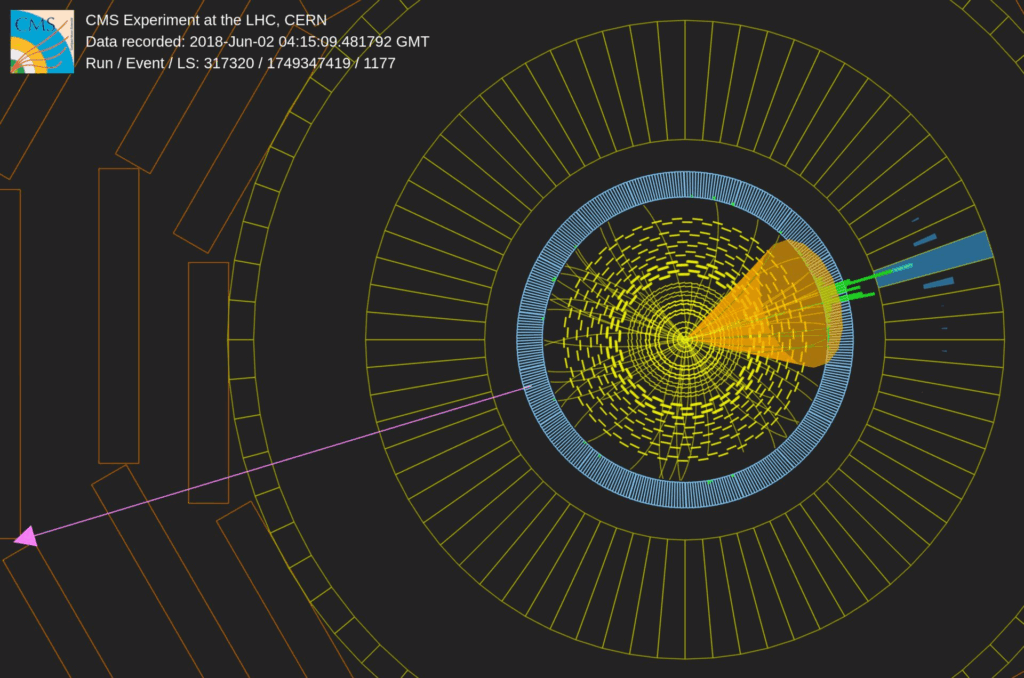

Search for boosted Higgs advances our understanding of dark matter

This story, featuring physics graduate student Shivani Lomte, was originally published by the CMS collaboration

The CMS Collaboration hunts for Higgs bosons recoiling against dark matter particles

Dark matter is one of the most perplexing mysteries of our universe, accounting for roughly 27% of its total energy. Dark matter does not emit, absorb, or reflect light, and is thus invisible to telescopes. However, its effects on gravitation are unmistakable. Although dark matter’s elementary nature remains unknown, scientists hypothesize that it might be made up of weakly interacting massive particles (WIMPs) that rarely interact with ordinary matter.

In the CMS experiment, we use the fundamental law of momentum conservation to infer the possible presence of dark matter in the detector. In particular, the momentum in the transverse plane should be conserved before and after the proton-proton (pp) collision – in other words, the transverse momenta of all particles should sum to zero. If momentum is missing, then this suggests that an ‘invisible’ particle, for instance a dark matter particle, has carried that momentum away. Since dark matter doesn’t interact with the detectors, we can’t directly observe it. To detect its presence, we use a ‘visible’ known particle that recoils against the dark matter particle, providing a detectable signal in the experiment. An example of this type of process is shown in Fig. 1.
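The momentum balance described above can be sketched in a few lines. This is a simplified illustration, assuming each visible particle is given by a transverse momentum in GeV and an azimuthal angle in radians; the function name and the example values are hypothetical, and real reconstruction involves calibrations that are not shown.

```python
import math


def missing_transverse_momentum(particles):
    """Return (magnitude, azimuthal angle) of the missing transverse momentum.

    particles: iterable of (pt, phi) pairs for the visible particles,
    with pt in GeV and phi in radians.
    """
    sum_px = sum(pt * math.cos(phi) for pt, phi in particles)
    sum_py = sum(pt * math.sin(phi) for pt, phi in particles)
    # The missing momentum is whatever is needed to balance the visible sum.
    miss_px, miss_py = -sum_px, -sum_py
    return math.hypot(miss_px, miss_py), math.atan2(miss_py, miss_px)


# Two back-to-back particles balance each other: nothing is missing.
balanced = [(50.0, 0.0), (50.0, math.pi)]
# A single visible object with nothing seen recoiling against it.
unbalanced = [(120.0, 0.5)]

print(missing_transverse_momentum(balanced)[0])
print(missing_transverse_momentum(unbalanced))
```

In the second case the missing momentum has the same magnitude as the visible object and points in the opposite direction, which is the pattern expected when a visible particle recoils against something invisible.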

In pp collisions, a photon, ‘jet’, W or Z boson can be emitted from the initial quark within the proton, whereas radiating a Higgs boson is extremely rare given its small coupling to the quarks. Higgs bosons might be preferentially emitted through a new particle acting as a ‘mediator’ between the standard model and the dark matter sector. The LHC offers a unique possibility to produce the mediator particle and study its interaction with the standard model and dark matter.

This analysis uses the “mono-Higgs” signature to search for dark matter particles, focusing on two scenarios that both involve Higgs bosons decaying to bottom quarks. If the Higgs boson is highly energetic (boosted), its decay products become collimated and can be reconstructed in a single large-radius ‘jet’. Alternatively, if the Higgs is not as energetic, we instead look for two small-radius jets, one from each bottom quark. The two scenarios are illustrated in Fig. 2.
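A common rule of thumb makes the boosted-versus-resolved distinction concrete: the angular separation between the two decay products of a particle with mass m and transverse momentum pT is roughly ΔR ≈ 2m/pT. The sketch below applies it to a Higgs boson decaying to two bottom quarks. The 125 GeV mass and the large-jet radius of 0.8 are assumptions made for illustration, and the function names are hypothetical; the actual analysis selection is more involved.

```python
HIGGS_MASS_GEV = 125.0   # approximate Higgs boson mass (assumption)
LARGE_JET_RADIUS = 0.8   # assumed radius parameter of the large-radius jet


def bb_separation(pt_higgs, mass=HIGGS_MASS_GEV):
    """Approximate angular separation of the two b quarks: Delta R ~ 2 m / pT."""
    if pt_higgs <= 0:
        raise ValueError("transverse momentum must be positive")
    return 2.0 * mass / pt_higgs


def reconstruction_category(pt_higgs, large_radius=LARGE_JET_RADIUS):
    """'boosted' if both b quarks fit in one large-radius jet, else 'resolved'."""
    return "boosted" if bb_separation(pt_higgs) < large_radius else "resolved"


for pt in (150.0, 500.0):
    print(pt, round(bb_separation(pt), 2), reconstruction_category(pt))
```

Under these assumptions the crossover sits near a transverse momentum of about 310 GeV, above which the two bottom quarks tend to merge into a single large-radius jet.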

“A key challenge in this search is that the dark matter signal is rare (at best) and well-known processes, as described in the standard model, produce very similar signatures. To reduce the backgrounds from known particles, we use distinguishing features like the momentum and energy distribution of the particles,” says Shivani Lomte, a graduate student at the University of Wisconsin–Madison who is leading this search. The precise estimation of the background is critical and is achieved using so-called control regions in the data. Such control regions are dominated by background processes, which allows us to quantify the amount of background in the signal region where we search for dark matter.
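One common way to carry a control-region measurement over to the signal region is a transfer factor taken from simulation. The sketch below shows that idea in its simplest form; it is an assumption about the general technique, not a description of the procedure used in this analysis, and the function name and event counts are hypothetical.

```python
def estimate_signal_region_background(n_data_cr, n_sim_cr, n_sim_sr):
    """Estimate the background yield in the signal region (SR).

    n_data_cr: events observed in data in the control region (CR)
    n_sim_cr:  simulated background events in the CR
    n_sim_sr:  simulated background events in the SR
    """
    if n_sim_cr <= 0:
        raise ValueError("the simulated control-region yield must be positive")
    # The simulation supplies only the SR-to-CR ratio; the data set the overall scale.
    transfer_factor = n_sim_sr / n_sim_cr
    return n_data_cr * transfer_factor


# Hypothetical yields: data sit 10% above simulation in the control region,
# so the simulated signal-region background of 50 events scales to about 55.
print(estimate_signal_region_background(1100, 1000.0, 50.0))
```

The appeal of this approach is that any overall mismodelling of the background rate cancels in the ratio, so the estimate relies on simulation only for the relative population of the two regions.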

In this analysis, once the backgrounds were well understood, we looked for the dark matter signal by comparing the observed data distributions to the predicted backgrounds and checking for discrepancies. Unfortunately, the observed data agree with the standard model predictions, so we conclude that our result shows no sign of dark matter. We can thus rule out those types of dark matter particles that would have been detected if they existed.

Regardless of the outcome, the search for dark matter is a journey that pushes the boundaries of human knowledge. Each step brings us closer to answering some of the most profound questions about the nature of the universe and our place within it.

Three grad students recognized as L&S Teaching Mentors

Physics PhD students Sam Kramer, Michelle Marrero Garcia, and Isaac Barnhill were recently named to the L&S Teaching Mentors program. The L&S Teaching Mentors are the heart of L&S’s Teaching Assistant (TA) Trainings. They are exceptionally passionate and knowledgeable teachers with proven track records for teaching excellence who work closely with the L&S TA Training and Support Team to facilitate various trainings and mentor L&S TAs.

Kramer and Marrero Garcia earned Lead Teaching Mentor designation, meaning that they have served as Teaching Mentors more than once and are taking on an additional leadership role within the program.

Learn more about the three Physics Teaching Mentors:

Isaac Barnhill, Teaching Mentor

Isaac began teaching as a peer mentor tutor in the UW Physics Learning Center during undergraduate studies. Now a PhD student in the Physics Department, Isaac has primarily taught electromagnetism, circuits, and optics at the introductory level. Isaac’s research is focused on increasing student agency and decision making in the laboratory component of their physics classes. By shifting the focus of lab activities from content reinforcement to engaging in authentic scientific practices, Isaac hopes to increase students’ sense of engagement and intellectual ownership in the classroom while simultaneously helping students build their data literacy and critical thinking skills. One of his favorite aspects of teaching is seeing students improve their ability to understand, describe, and predict the physical world around them. He always seeks to center the student by promoting active learning in the classroom, allowing students to work out their thoughts in an environment with both high expectations and high support.

Michelle Marrero Garcia, Lead Teaching Mentor

Michelle started teaching in her first semester of the Physics PhD program. She has taught either kinematics or electromagnetism at the introductory level every semester since then, but she loves teaching any subject within Physics. Her favorite part is watching her students’ faces light up as they explore the world through a new lens. In Michelle’s approach to teaching, she always tries to be empathic and put herself in the student’s position. She has found that having changed her field of study from mechanical engineering (as an undergrad) to physics (as a grad) gave her the ability to understand how students who are new to the subject think and feel.

Sam Kramer, Lead Teaching Mentor

Sam is a third-year Ph.D. candidate in the Department of Physics and has been teaching for Physics 202, a course for engineering major undergraduates that focuses on electricity, magnetism, and optics, since arriving in Madison. Sam also taught for a similar course as an undergraduate at Saint Louis University. In this role, he leads both discussions, which focus on problem solving, and labs, which provide hands-on experience with the concepts being taught. Physics can be an overwhelming subject, so Sam tries to distill the material into manageable chunks for the students, emphasizing the broader concepts underlying the formulas students use and drawing explicit connections between parts of the curricula. This is meant to develop the dynamic problem solving skills students need when encountering problems they have not seen before.